IIRC `pip install torch` also installs `libcudart.so` and other things with it.

`conda install -c conda-forge cudatoolkit-dev -y`
`which nvcc`
`nvcc --version`
`echo $CUDA_HOME`
`export CUDA_HOME=$CONDA_PREFIX`
`echo $CUDA_HOME`

GroundingDINO and Segment-Anything: I was trying to install Grounded-Segment-Anything but somehow I cannot run it in GPU mode.
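The shell checks above (`which nvcc`, `echo $CUDA_HOME`) can also be run from Python before building a CUDA extension. This is only a minimal sketch: the function name `cuda_build_env_report` is hypothetical, and it assumes the build reads `CUDA_HOME` and finds `nvcc` through `PATH`, as the commands above suggest.

```python
import os
import shutil


def cuda_build_env_report(environ=None):
    """Summarize what a CUDA extension build would see (hypothetical helper)."""
    env = os.environ if environ is None else environ
    # Equivalent of `which nvcc`: look for the compiler on PATH.
    nvcc_path = shutil.which("nvcc", path=env.get("PATH"))
    # Equivalent of `echo $CUDA_HOME` and `echo $CONDA_PREFIX`.
    cuda_home = env.get("CUDA_HOME")
    conda_prefix = env.get("CONDA_PREFIX")
    return {
        "nvcc": nvcc_path,
        "CUDA_HOME": cuda_home,
        "CONDA_PREFIX": conda_prefix,
        # True only when CUDA_HOME is set and points at the active conda env.
        "cuda_home_matches_conda": bool(cuda_home) and cuda_home == conda_prefix,
    }


if __name__ == "__main__":
    for key, value in cuda_build_env_report().items():
        print(f"{key}: {value}")
```

If `nvcc` comes back as `None` or `CUDA_HOME` is unset, that would be consistent with an extension quietly falling back to a CPU-only build.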

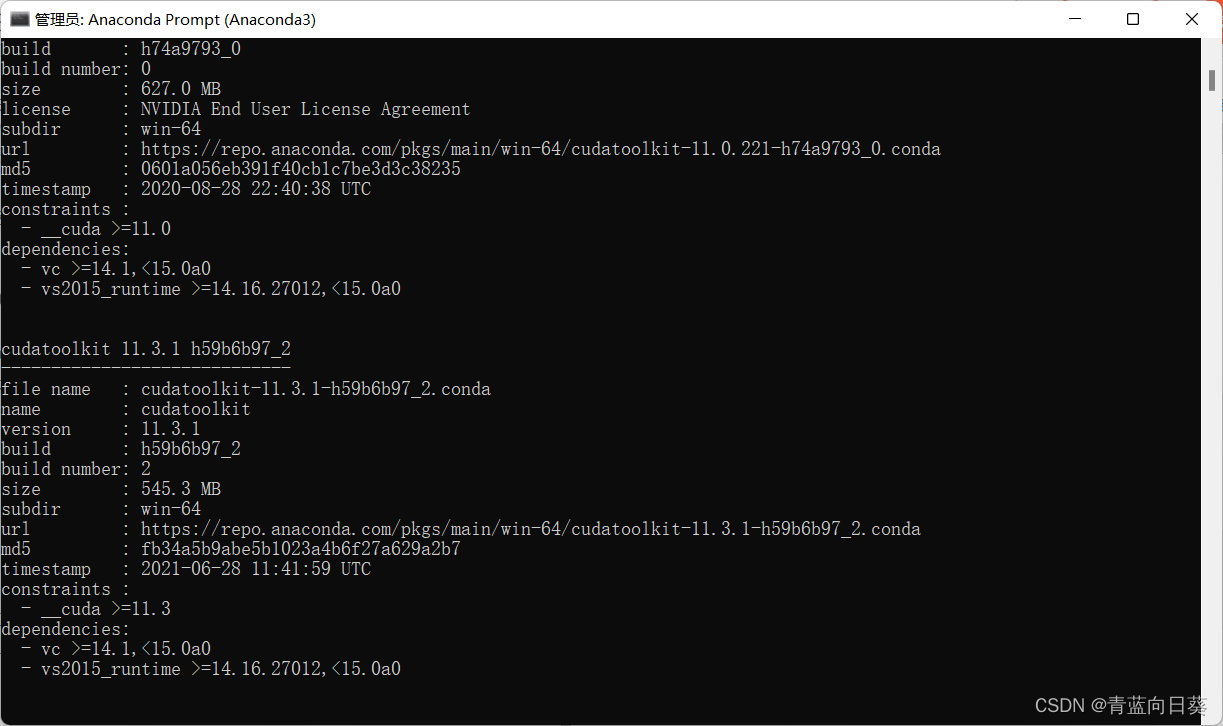

    _ipyw_jlab_nb_ext_conf            0.1.0    py39h06a4308_1
    argon2-cffi-bindings              21.2.0   py39h7f8727e_0
    backports.functools_lru_cache     1.6.4    pyhd3eb1b0_0
    conda-package-handling            1.9.

I remember installing PyTorch via pip with an incorrect CUDA version (10.1 vs 11) and it still worked.

    Workqueue Threading Layer Available          : True
    List of accessible CPUs cores                : 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15
    CFS Restrictions (CPUs worth of runtime)     : None
    CPU Features                                 : 64bit adx aes avx avx2 bmi bmi2

It is very likely my situation is due to my inexperience, since this is the first time I am doing this. I also have Nvidia CUDA installed and can successfully build and run different examples. It is a fresh install of Ubuntu with a fresh install of conda/numba.

To install this package run one of the following: `conda install -c conda-forge cuda-compiler`. Description: a meta-package containing tools to start developing and compiling a basic CUDA application.

Is there a reason to prefer installing these from one of the channels over the other?
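The remark above about a pip-installed PyTorch working despite a mismatched CUDA version is easier to reason about once you see which CUDA runtime the installed build reports. pip wheels of PyTorch typically ship their own CUDA runtime libraries, so the system toolkit version does not have to match. A minimal sketch follows; the helper name `torch_cuda_report` is hypothetical, and it returns early if PyTorch is not installed.

```python
import importlib


def torch_cuda_report():
    """Report the CUDA runtime PyTorch was built with and whether a GPU is usable."""
    try:
        torch = importlib.import_module("torch")
    except ImportError:
        # PyTorch is not installed in this environment.
        return {"torch_installed": False}
    return {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # CUDA version the binary was built against; None for CPU-only builds.
        "built_with_cuda": torch.version.cuda,
        # Whether PyTorch can actually reach a GPU through the installed driver.
        "cuda_available": torch.cuda.is_available(),
    }


if __name__ == "__main__":
    print(torch_cuda_report())
```

A `built_with_cuda` of `None` would point to a CPU-only build, in which case no driver or toolkit change will make `torch.cuda.is_available()` return `True`.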

I would really appreciate it if anyone could help out. My apologies for rehashing a perhaps benign topic: not being able to run numba on Ubuntu.

cudatoolkit and cudnn are available on both the anaconda and conda-forge channels.

I have Ubuntu 22.04 LTS and I followed these instructions: I am trying to install CUDA 11.3 (since I understand this is the most stable one and any version after it leads to compatibility issues). I ran `sudo ubuntu-drivers autoinstall` to install the drivers and nothing changed. If I run the install command for nvidia-cuda-toolkit, the `nvidia-smi` command stops working, and when I test whether CUDA is being used with PyTorch, I keep getting `torch.cuda.is_available()` as False. The weird part is that in my base conda environment, `torch.cuda.is_available()` returns True. When I enter all the commands I run `nvidia-smi` and I get this:

    | NVIDIA-SMI 520.61.05    Driver Version: 520.61.05    CUDA Version: 11.8 |
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr.
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |  0%   37C    P8     8W / 220W |     99MiB /  8192MiB |      0%      Default |
    | GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |   0   N/A  N/A      1498      G   /usr/bin/gnome-shell               10MiB |

But when I run `nvcc --version` it returns: Command 'nvcc' not found, but can be installed with: sudo apt install nvidia-cuda-toolkit.

Solving environment failed with initial

Solution 3: Upgrade conda to the latest version. conda install pytorch torchvision torchaudio cudatoolkit 10

conda-forge is a community-led conda channel of installable packages. In order to provide high-quality builds, the process has been automated into the conda-forge GitHub organization. The conda-forge organization contains one repository for each of the installable packages. Such a repository is known as a feedstock.

The CUDA runtime libraries are packed in the cudatoolkit package, which is declared as a run dependency (in conda's terminology) and is installed when you install GPU packages like PyTorch. GPU packages are compiled, linked against cudatoolkit, and packaged, which is the reason you only need the CUDA driver to be installed and nothing else.

The NVIDIA CUDA Toolkit provides a development environment for creating high-performance GPU-accelerated applications. With the CUDA Toolkit, you can develop, optimize and deploy your applications on GPU-accelerated embedded systems, desktop workstations, enterprise data centers, cloud-based platforms and HPC supercomputers. ... on Linux only requires installing the CUDA Toolkit 7.0 or newer versions.

To install TensorFlow, use the conda package with dependency management.

This command creates a new conda environment called rapids-0.19 and installs the cuDF package, using the package specs blazingsql=0.19 cudf=0.19 python=3.7 cudatoolkit=11.0.
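The "CUDA Version" shown in the `nvidia-smi` header is the highest CUDA version the installed driver supports, not the version of any installed toolkit, which is why `nvidia-smi` can work while `nvcc` is missing. The sketch below reads that header and compares it with a toolkit version such as the 11.3 or 11.0 mentioned above. The function names are hypothetical, and the comparison is a simplification that only looks at major.minor numbers.

```python
import re


def parse_smi_header(text):
    """Extract (driver_version, cuda_version) from nvidia-smi header text."""
    driver = re.search(r"Driver Version:\s*([\d.]+)", text)
    cuda = re.search(r"CUDA Version:\s*([\d.]+)", text)
    return (driver.group(1) if driver else None,
            cuda.group(1) if cuda else None)


def major_minor(version):
    """Turn a version string like '11.8' into a comparable (11, 8) tuple."""
    return tuple(int(part) for part in version.split(".")[:2])


def driver_supports_toolkit(smi_cuda_version, toolkit_version):
    """Simplified check: the driver's CUDA version must be at least the toolkit's."""
    return major_minor(smi_cuda_version) >= major_minor(toolkit_version)


if __name__ == "__main__":
    header = "| NVIDIA-SMI 520.61.05 Driver Version: 520.61.05 CUDA Version: 11.8 |"
    driver_version, cuda_version = parse_smi_header(header)
    print(driver_version, cuda_version)
    print(driver_supports_toolkit(cuda_version, "11.3"))
```

Under this simplified rule, the 520.61.05 driver from the output above would be new enough for a cudatoolkit 11.0 or 11.3 environment.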
